Latency Intolerance

Several things occurred almost simultaneously over the last week or so that spurred my thinking for this latest blog post.

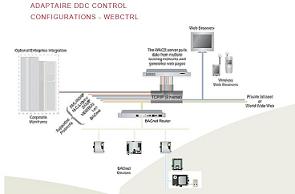

The diagram above illustrates the network configuration for Adaptaire, our DDC control system. We have launched a project to add functionality to this software, which has been around for almost 12 years now. The new function is a real-time "dashboard" that will give building occupants feedback on how well the system is performing. Because the dashboard displays information in real time, and might transmit that data over the Internet, one factor we need to consider is the speed at which we "refresh" the data.

The second thing that spurred our thinking is our plan to migrate our current product selection software from a desktop environment to a web-based environment. This means that the data entered, and the results returned by the selection software, will be transmitted over the Internet. Experiments with some very early versions of the software have highlighted "speed of response" issues that must be addressed as we move forward.

The third event that provoked this blog entry was a meeting with one of the chief design engineers for Integrated Design Group, a data center design firm responsible for projects worldwide. In the course of the discussion this engineer made a prediction that the data center industry will move to more, and smaller, data centers.

The common thread in all of these items is the "need for speed", which translates into reducing something called "latency". Latency is the time it takes for your input, or our dashboard refresh signal, to actually reach its final destination. As incredible as it might seem, even though these signals move down fiber-optic cables at close to the speed of light (roughly two-thirds of its speed in a vacuum), it can take a "long" time for a signal to reach its end point. First, remember that "long" in this industry is measured in milliseconds, or even microseconds. Second, remember that most exchanges involve more than one trip: the receiving end checks the data for errors and sends back an acknowledgment, or a request to send it again. In addition, most of the time those signals pass through several routers and servers before reaching their destination, and each of those "hops" adds its own error checking and traffic control delays.

One solution, and the reason this engineer believes the future of the industry is more, and smaller, data centers, is to locate data centers as close as possible to the source or destination of the signal. This reduces the number of "hops" and the physical distance between points, thus reducing latency.
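To put rough numbers on the effect of distance and "hops", here is a back-of-the-envelope sketch in Python. It is not taken from our software. The function names, the 0.67 velocity factor for fiber, the 0.5 ms per-hop delay, and the example distances and hop counts are all assumptions chosen for illustration; real networks will vary.

```python
# Back-of-the-envelope latency estimate. All figures are illustrative assumptions,
# not measurements from any real network.

SPEED_OF_LIGHT_KM_PER_S = 299_792.458  # speed of light in a vacuum
FIBER_VELOCITY_FACTOR = 0.67           # assumed: light in glass fiber moves at about 2/3 of c
PER_HOP_DELAY_MS = 0.5                 # assumed average delay added by each router or server


def one_way_latency_ms(distance_km, hops, per_hop_delay_ms=PER_HOP_DELAY_MS):
    """Estimate one-way latency as propagation delay plus a fixed delay per hop."""
    fiber_speed_km_per_s = SPEED_OF_LIGHT_KM_PER_S * FIBER_VELOCITY_FACTOR
    propagation_ms = distance_km / fiber_speed_km_per_s * 1000.0
    return propagation_ms + hops * per_hop_delay_ms


def round_trip_latency_ms(distance_km, hops, per_hop_delay_ms=PER_HOP_DELAY_MS):
    """Estimate the time for a request to go out and the reply to come back."""
    return 2 * one_way_latency_ms(distance_km, hops, per_hop_delay_ms)


if __name__ == "__main__":
    # Hypothetical scenarios: a distant data center versus a nearby one.
    far = round_trip_latency_ms(distance_km=4000, hops=15)
    near = round_trip_latency_ms(distance_km=50, hops=3)
    print(f"Distant data center: {far:.1f} ms round trip")
    print(f"Nearby data center:  {near:.1f} ms round trip")
```

Under these assumptions, the nearby data center wins on both counts: less fiber to travel and fewer hops to cross. The model ignores congestion, retransmissions, and server processing time, so treat it as a lower bound rather than a prediction.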

The latency issue is so important in industries like equity trading that data center companies are battling each other to locate their facilities just one block closer to the Wall Street trading floor.

We are all becoming spoiled by the speed at which we can find information on the Internet, download music, watch online videos, and so on. The end result is that we are acquiring a new "disease" that I am calling "Latency Intolerance".